Sharing notes from my ongoing learning journey — what I build, break and understand along the way.


From Bare Metal to GitOps: Architecting a Modern Kubernetes Cluster for PactNote

Behind every great software project lies an invisible hero: infrastructure. When starting a new project, many of us open our code editors right away and focus solely on building the application’s features. However, as the project grows, this approach tends to bring scaling problems, security vulnerabilities, and the classic “it works on my machine” syndrome.

For my brand-new project, PactNote (whose details and vision I will share with you in the future), I decided to take a completely different path. Before writing a single line of backend code for the application, I built a secure, self-healing, and autonomous infrastructure for it to live on. In this post, I will explain step by step how I laid the foundation for PactNote using the GitOps philosophy (one of the gold standards of the modern DevOps world), which technologies I chose and why, and the engineering challenges I overcame along the way.

1. The Philosophy: Why GitOps? Why Did I Put an End to Manual Intervention?

In traditional server management, deploying an application meant connecting to the server via SSH, copying files, restarting services, and praying that nothing breaks. That approach is now history.

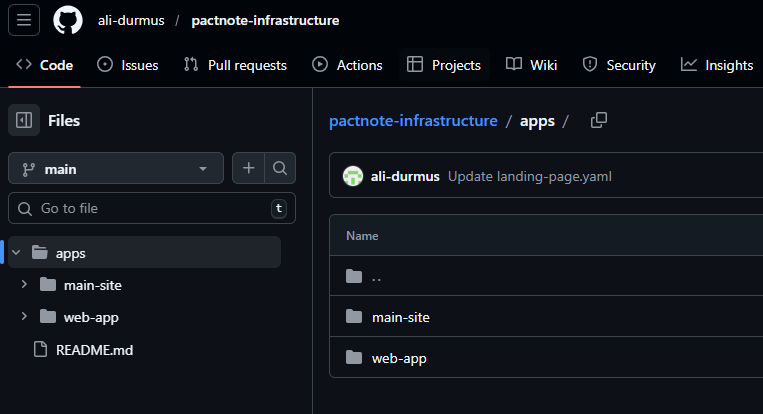

For PactNote, I adopted the “Single Source of Truth” principle. Every configuration, routing rule, and application version in my infrastructure lives as code (Infrastructure as Code) in a GitHub repository. Touching the server manually is strictly forbidden! When I update a file on GitHub, an agent inside my cluster detects the change and syncs the cluster to match the new code, typically within a few minutes (ArgoCD polls the repository every three minutes by default, and a Git webhook can shorten that to seconds).

As you can see in the visual above, all configurations for ArgoCD, Traefik, Grafana, and my web application consist entirely of YAML files. If the server crashes or if I need to migrate to a new one, getting the entire system back up and running will only take a few minutes.

2. Laying the Foundation: K3s (Lightweight Kubernetes) on Bare Metal

While managed Kubernetes services (EKS, GKE, AKS) offered by cloud providers (AWS, GCP, Azure) are fantastic, they can inflate costs very quickly when you are bootstrapping your own project. That’s why I decided to rent a robust bare-metal server from Hetzner and build my own Kubernetes cluster from scratch for PactNote.

For orchestration, I opted for K3s, originally developed by Rancher, instead of upstream Kubernetes (K8s). Why? K3s strips out much of the weight of upstream Kubernetes (legacy and alpha features, in-tree cloud provider add-ons, and storage drivers) and ships as a single binary designed for resource-constrained environments such as IoT and edge computing. It consumes significantly less RAM, installs in seconds, and still offers a fully compatible, CNCF-certified Kubernetes experience.

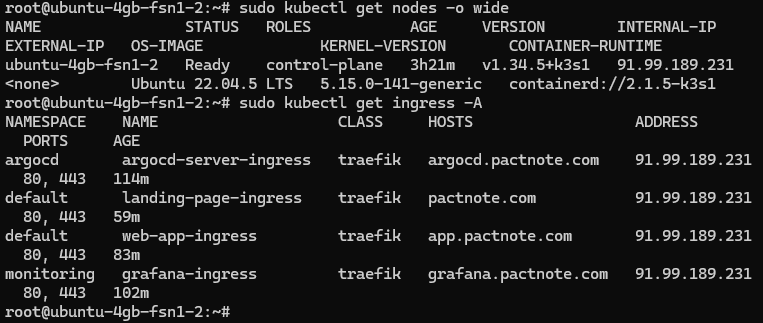

In this terminal output, you can see my server in the “Ready” state and my Ingress controller (Traefik), which handles incoming traffic, up and running. I now have a platform that manages my containers, restarts crashed ones, and distributes resources efficiently.
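Under the hood, the “Ready” column reflects the node’s Ready condition reporting status "True" in the Node object. As a minimal sketch, assuming output shaped like `kubectl get nodes -o json` (the sample below is trimmed down and the node name is hypothetical), the same check can be scripted:

```python
import json

# Trimmed-down, hypothetical sample of `kubectl get nodes -o json` output.
SAMPLE = """
{
  "items": [
    {
      "metadata": {"name": "pactnote-node-1"},
      "status": {
        "conditions": [
          {"type": "MemoryPressure", "status": "False"},
          {"type": "Ready", "status": "True"}
        ]
      }
    }
  ]
}
"""


def node_readiness(node_list: dict) -> dict[str, bool]:
    """Map each node name to whether its Ready condition reports "True"."""
    result = {}
    for node in node_list.get("items", []):
        name = node["metadata"]["name"]
        conditions = node.get("status", {}).get("conditions", [])
        result[name] = any(
            c.get("type") == "Ready" and c.get("status") == "True"
            for c in conditions
        )
    return result


if __name__ == "__main__":
    print(node_readiness(json.loads(SAMPLE)))  # {'pactnote-node-1': True}
```

A check like this is handy in a bootstrap script that waits for the node before applying anything else.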

3. Traffic Management and Security: The Traefik & Cert-Manager Combo

No matter how great your applications are, they are useless if you cannot correctly route incoming requests (HTTP/HTTPS) from the outside world to the right container. The Traefik Ingress Controller, which comes by default with K3s, acts as our security guard and router at the front door.

Instead of traditional Nginx or Apache configurations, I utilized Traefik’s dynamic routing capabilities. When I create a new YAML file for an application and declare, “Send incoming traffic from app.pactnote.com to Service X,” Traefik detects this instantly and begins routing the traffic without any downtime.
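Conceptually, host-based routing boils down to a lookup from the request’s Host header to a backend Service. The sketch below is a deliberately simplified model, not Traefik’s implementation: real Traefik also matches paths, applies rule priorities, and runs middlewares. The Service names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Route:
    host: str     # hostname declared in an Ingress rule
    service: str  # hypothetical backend Service name
    port: int


def build_routing_table(routes: list[Route]) -> dict[str, Route]:
    """Index routes by hostname; DNS names are case-insensitive, so lowercase them."""
    table: dict[str, Route] = {}
    for route in routes:
        # A later route for the same host silently replaces an earlier one here.
        table[route.host.lower()] = route
    return table


def resolve(table: dict[str, Route], host_header: str) -> Optional[Route]:
    """Pick a backend using the request's Host header, ignoring any ':port' suffix."""
    hostname = host_header.split(":", 1)[0].lower()
    return table.get(hostname)


if __name__ == "__main__":
    table = build_routing_table([
        Route("pactnote.com", "landing-page", 80),
        Route("app.pactnote.com", "test-app", 80),
    ])
    print(resolve(table, "app.pactnote.com").service)  # test-app
```

Adding a new application is just adding a new entry, which is why a new Ingress can go live without touching the existing routes.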

On the security front, I set up the Let’s Encrypt and cert-manager duo. Obtaining and renewing SSL/TLS certificates is no longer a chore for me. The moment a new domain (e.g., grafana.pactnote.com) is added to an Ingress, cert-manager automatically talks to Let’s Encrypt, completes the ACME HTTP-01 challenge to prove that I control the domain, and stores the issued certificate in a Kubernetes Secret that Traefik uses to serve HTTPS. It also renews the certificate before it expires. This entire process happens in the background with zero human intervention.
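What does “completing the HTTP-01 challenge” actually involve? Per the ACME specification (RFC 8555), Let’s Encrypt hands out a token, and the domain must serve a “key authorization” over plain HTTP at /.well-known/acme-challenge/<token>. That value is the token, a dot, and the base64url-encoded SHA-256 thumbprint (RFC 7638) of the ACME account’s public key. cert-manager does all of this for you with a temporary solver; the sketch below only shows how the response is formed, and the key values in the usage note are placeholders, not a real key.

```python
import base64
import hashlib
import json


def b64url(data: bytes) -> str:
    """Base64url encoding without '=' padding, as used throughout ACME/JOSE."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def jwk_thumbprint(jwk: dict) -> str:
    """RFC 7638 thumbprint: SHA-256 over the required JWK members, sorted, no whitespace."""
    required = {
        "EC": ("crv", "kty", "x", "y"),
        "RSA": ("e", "kty", "n"),
    }[jwk["kty"]]
    canonical = json.dumps(
        {k: jwk[k] for k in required}, sort_keys=True, separators=(",", ":")
    )
    return b64url(hashlib.sha256(canonical.encode("utf-8")).digest())


def http01_response(token: str, account_jwk: dict) -> tuple[str, str]:
    """Return the (path, body) pair that must be reachable over HTTP on port 80."""
    path = f"/.well-known/acme-challenge/{token}"
    key_authorization = f"{token}.{jwk_thumbprint(account_jwk)}"
    return path, key_authorization
```

For example, `http01_response("tok123", {"kty": "EC", "crv": "P-256", "x": "placeholder-x", "y": "placeholder-y"})` yields the path `/.well-known/acme-challenge/tok123` and a body that starts with `tok123.`. Because the challenge arrives on port 80, Traefik must route plain HTTP for the domain, at least for that path.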

4. The Autonomous Orchestrator: Continuous Deployment with ArgoCD

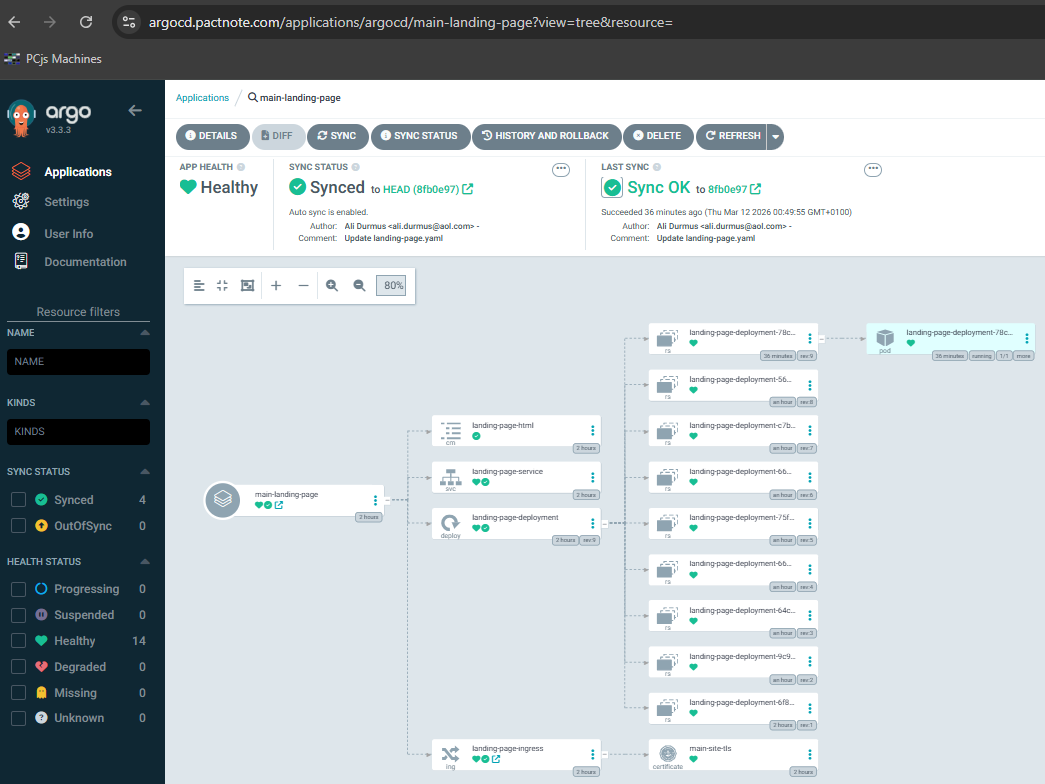

Now we reach the beating heart of the system, the tool that brings the GitOps philosophy to life: ArgoCD.

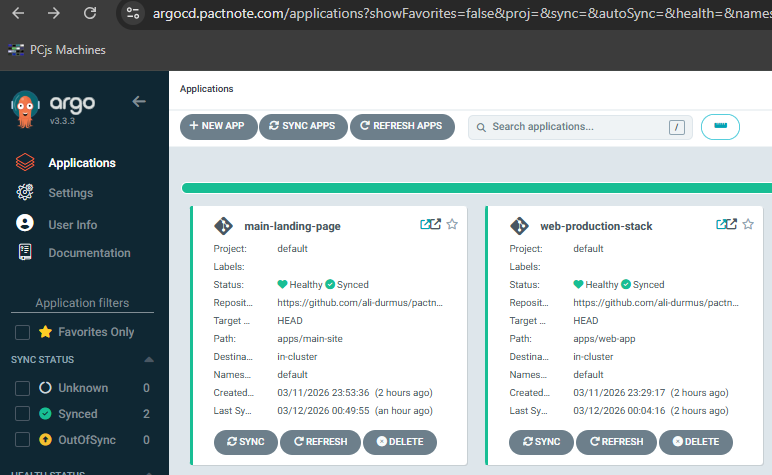

ArgoCD is an agent that runs inside my Kubernetes cluster and keeps its eyes fixed on my GitHub repository. Its working mechanism is simple yet powerful: it continuously compares the “Desired State” (the YAML manifests on GitHub) with the “Live State” (what is actually running in the cluster). If I bump the Nginx version from 1.24 to 1.25 on GitHub, ArgoCD flags the application as “OutOfSync” and, with automated sync enabled, rolls the new version out to the cluster.
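At its core, the comparison is a diff between two descriptions of the same resource. The minimal sketch below assumes both states have already been flattened into dicts of fields, and the image tags are illustrative; real ArgoCD compares full, normalized manifests and ignores fields that Kubernetes fills in by default.

```python
from typing import Any


def diff_state(desired: dict[str, Any], live: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Return {field: (desired_value, live_value)} for every field that differs."""
    keys = desired.keys() | live.keys()
    return {
        k: (desired.get(k), live.get(k))
        for k in sorted(keys)
        if desired.get(k) != live.get(k)
    }


def sync_status(desired: dict[str, Any], live: dict[str, Any]) -> str:
    """Mirror ArgoCD's two basic sync states."""
    return "OutOfSync" if diff_state(desired, live) else "Synced"


if __name__ == "__main__":
    desired = {"image": "nginx:1.25-alpine", "replicas": 1}  # what Git says
    live = {"image": "nginx:1.24-alpine", "replicas": 1}     # what the cluster runs
    print(sync_status(desired, live))  # OutOfSync
    print(diff_state(desired, live))   # {'image': ('nginx:1.25-alpine', 'nginx:1.24-alpine')}
```

Syncing then means applying the desired values so that the diff becomes empty again.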

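This tree structure is essentially the anatomy of the architecture. From ConfigMaps to Services and from Deployments to Pods, I can instantly see how all resources are interconnected and verify that they are all in a “Healthy” state. If a pod crashes, Kubernetes’ own controllers (the Deployment’s ReplicaSet) recreate it, while ArgoCD’s “Self-Heal” feature adds another layer on top by reverting any manual change to the Git-managed resources back to the state declared on GitHub.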
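Recreating crashed pods is, strictly speaking, the job of Kubernetes’ ReplicaSet controller, which runs a reconcile loop similar in spirit to ArgoCD’s. Here is a minimal sketch of one pass of that loop, assuming pods are tracked simply by name; the naming scheme is hypothetical (real ReplicaSets append random suffixes).

```python
import itertools

# Hypothetical pod-name generator; real ReplicaSets use random suffixes instead.
_suffixes = itertools.count(1)


def reconcile_replicas(desired: int, running: list[str], prefix: str = "landing-page") -> list[str]:
    """One reconcile pass: return the pod list after creating or deleting pods to match `desired`."""
    if desired < 0:
        raise ValueError("desired replica count cannot be negative")
    pods = list(running)
    while len(pods) < desired:
        # A crashed or deleted pod simply shows up as "missing", so a new one is created.
        pods.append(f"{prefix}-{next(_suffixes)}")
    # Scale down if more pods are running than desired (no-op otherwise).
    del pods[desired:]
    return pods
```

Because the controller only compares counts, it does not need to know why a pod disappeared; it just closes the gap on every pass.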

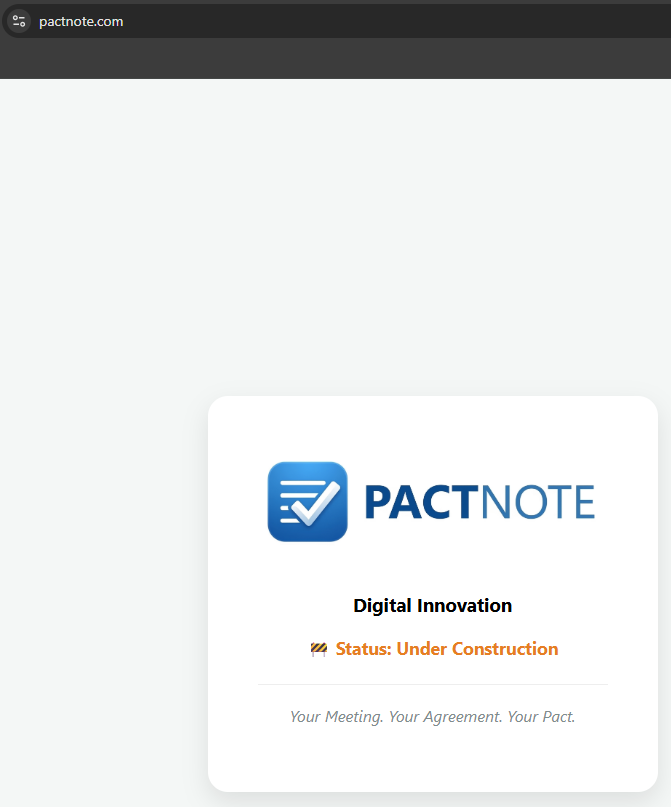

5. The First Output: The Architecture of the “Under Construction” Page

After setting up the infrastructure, greeting visitors with a blank error screen would have been unprofessional. I decided to design a modern “Under Construction” page that reflects the PactNote brand. However, I didn’t use an external web server (like Apache) or legacy tools like cPanel to publish this page. To test the power of the infrastructure, I embedded my static HTML file directly into a Kubernetes ConfigMap object!

This engineering approach works as follows:

- The HTML and CSS I wrote are stored inside a ConfigMap.

- A simple Nginx pod (Alpine Linux-based, ultra-lightweight) is spun up.

- This Nginx pod mounts the HTML from the ConfigMap into its own file system (/usr/share/nginx/html) through a volume mount.

- The Traefik Ingress routes incoming traffic for pactnote.com to this Nginx pod.
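When a ConfigMap is mounted as a volume, each key under its data becomes a file with that name inside the mount path, so an index.html key turns into /usr/share/nginx/html/index.html. The sketch below imitates that projection on local disk. It is a simplified model: the kubelet actually writes into a hidden timestamped directory and swaps a symlink for atomic updates, and the 1 MiB check here only counts keys and values, not the object’s metadata.

```python
import os
import tempfile

CONFIGMAP_LIMIT_BYTES = 1024 * 1024  # Kubernetes caps ConfigMap data at 1 MiB


def project_configmap(data: dict[str, str], mount_path: str) -> list[str]:
    """Write each ConfigMap key as a file under mount_path, like a volume mount does."""
    # Approximate size check: keys plus values, encoded as UTF-8.
    total = sum(len(k.encode("utf-8")) + len(v.encode("utf-8")) for k, v in data.items())
    if total > CONFIGMAP_LIMIT_BYTES:
        raise ValueError(f"ConfigMap data is {total} bytes, above the 1 MiB limit")
    os.makedirs(mount_path, exist_ok=True)
    written = []
    for key, value in data.items():
        path = os.path.join(mount_path, key)
        with open(path, "w", encoding="utf-8") as f:
            f.write(value)
        written.append(path)
    return sorted(written)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        html_dir = os.path.join(root, "usr", "share", "nginx", "html")
        page = {"index.html": "<h1>Under Construction</h1>", "style.css": "body { margin: 0; }"}
        for p in project_configmap(page, html_dir):
            print(os.path.basename(p))
```

The size limit is the practical catch of this approach: it suits a small landing page, but larger static assets belong in a container image or object storage.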

Because of this, even when I want to fix a typo on the “Under Construction” page, I never SSH into the server. I simply update the HTML on GitHub, ArgoCD pulls the change, and the live site updates shortly afterwards (the kubelet refreshes mounted ConfigMap files on its periodic sync, so this can take a minute or so).

The live result: Zero manual intervention, 100% code-managed, SSL-secured, and a landing page that reflects the brand’s prestige.

6. The Observation Tower: Metric Monitoring with Prometheus and Grafana

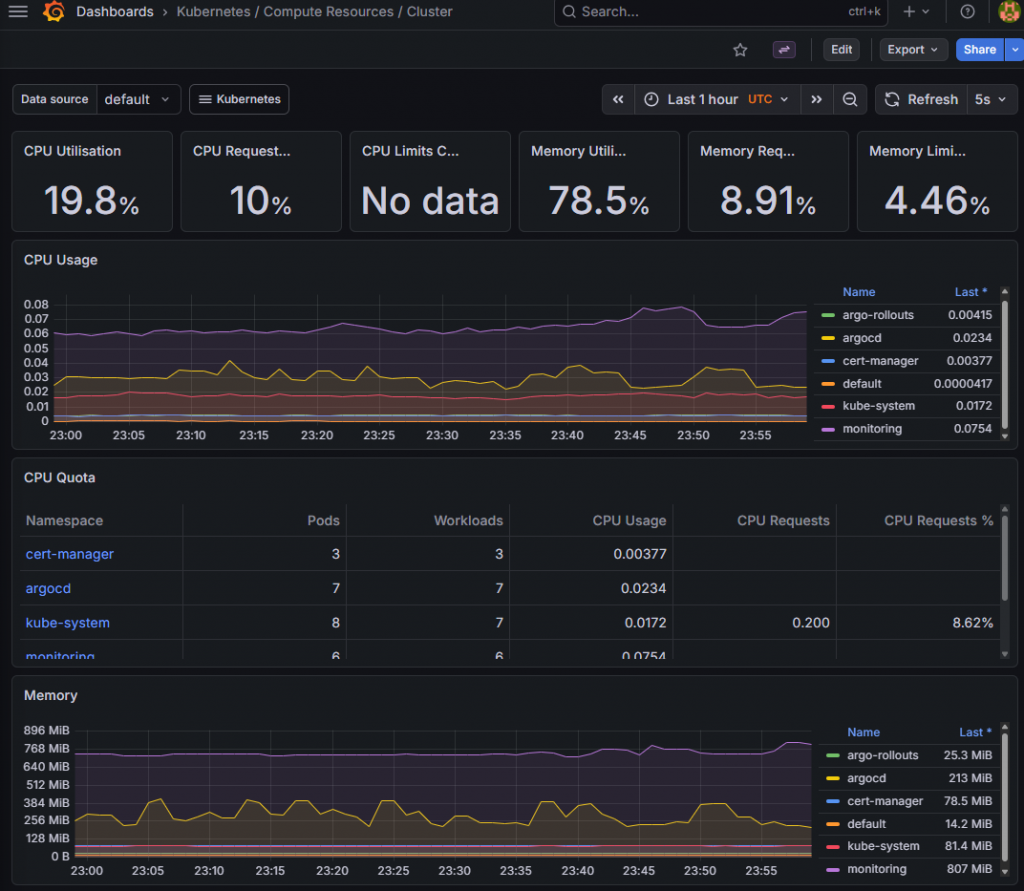

“You can’t manage what you don’t measure.” This is one of the most fundamental rules of the software world. I couldn’t allow this massive factory I built to fly blind. Therefore, I integrated Prometheus and Grafana, the world’s most popular open-source observability tools, into the system.

Prometheus is a time-series database that scrapes thousands of different metrics at a regular interval, from the server’s CPU temperature and real-time network traffic spikes to the memory consumption of K3s pods and HTTP error codes on the Ingress. However, reading this raw data is difficult. This is where Grafana comes into play.
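Many of these metrics are counters (node_exporter’s node_cpu_seconds_total is one example) that only ever go up, so dashboards plot their per-second rate rather than the raw value. The sketch below is a simplified version of that idea, not Prometheus’s actual rate() function: it handles counter resets but skips the extrapolation to the window boundaries that Prometheus performs.

```python
def simple_rate(samples: list[tuple[float, float]]) -> float:
    """Per-second increase of a counter from (timestamp_seconds, value) samples.

    Simplified: handles counter resets, but does not extrapolate to the
    edges of the query window the way Prometheus's rate() does.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    increase = 0.0
    for (_, prev), (_, cur) in zip(samples, samples[1:]):
        # A drop means the counter restarted from zero (e.g. the process restarted).
        increase += cur - prev if cur >= prev else cur
    elapsed = samples[-1][0] - samples[0][0]
    return increase / elapsed


if __name__ == "__main__":
    # Hypothetical scrapes every 15 s of a request counter.
    print(simple_rate([(0, 100), (15, 130), (30, 160)]))  # 2.0
```

Reset handling matters on a cluster where pods restart: without it, a restarted container would show up as a huge negative spike.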

Grafana takes this complex data collected by Prometheus and transforms it into visually stunning, easily understandable real-time dashboards for me.

Thanks to this panel, I can analyze at a single glance how heavily the server is loaded, which application is consuming too much RAM, or if there is a bottleneck in the system. When the PactNote application eventually goes live and thousands of users enter the system, these indicators will be lifesavers.
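Every Grafana panel is ultimately backed by a PromQL query sent to Prometheus’s HTTP API. As an illustration, a “which pod is using too much RAM” panel might sum cAdvisor’s container_memory_working_set_bytes per pod. The sketch below builds such a request against the instant-query endpoint /api/v1/query; the in-cluster address and namespace are hypothetical and depend on how the monitoring stack was installed.

```python
from urllib.parse import urlencode

PROMETHEUS_URL = "http://prometheus.monitoring.svc:9090"  # hypothetical in-cluster address


def per_pod_memory_query(namespace: str) -> str:
    """PromQL: working-set memory per pod; container!="" drops the pod-level aggregate series."""
    return (
        "sum by (pod) (container_memory_working_set_bytes"
        f'{{namespace="{namespace}", container!=""}})'
    )


def instant_query_url(base_url: str, promql: str) -> str:
    """Build a GET URL for Prometheus's instant-query endpoint."""
    return f"{base_url}/api/v1/query?{urlencode({'query': promql})}"


if __name__ == "__main__":
    print(instant_query_url(PROMETHEUS_URL, per_pod_memory_query("default")))
```

Pasting the same PromQL into a Grafana panel gives the per-pod memory graph without writing any code.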

7. Challenges Faced and Engineering Lessons

While setting up this infrastructure, not everything worked perfectly on the first try. One of the most interesting issues I encountered was a “Domain Collision” at the Ingress (Traffic Routing) layer.

Initially, both my default test application and the newly created “Landing Page” Ingress claimed the exact same domain (pactnote.com). With two different doors opened for the same address, Traefik had no unambiguous way to decide which room to send a visitor to, and routing for the root domain broke down. The solution was to return to a basic principle of microservices architecture: isolating traffic. I resolved the collision by moving my test application to the app.pactnote.com subdomain and dedicating the root domain entirely to the storefront page. Problems like this are excellent practice for gaining a deeper understanding of Kubernetes’ declarative nature and its networking layer.
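This kind of collision is easy to catch before it reaches the cluster, for example as a check that runs against the repository. A minimal sketch, assuming the Ingress rules have already been extracted into (host, path) pairs per Ingress (the Ingress names are hypothetical): two Ingresses may legitimately share a host with different paths, so the check keys on host and path together.

```python
from collections import defaultdict


def find_route_collisions(ingresses: dict[str, list[tuple[str, str]]]) -> dict[tuple[str, str], list[str]]:
    """Given {ingress_name: [(host, path), ...]}, return every (host, path) claimed by more than one Ingress."""
    claims: dict[tuple[str, str], list[str]] = defaultdict(list)
    for name, rules in ingresses.items():
        for host, path in rules:
            claims[(host.lower(), path)].append(name)
    return {route: sorted(names) for route, names in claims.items() if len(names) > 1}


if __name__ == "__main__":
    before = {"test-app": [("pactnote.com", "/")], "landing-page": [("pactnote.com", "/")]}
    after = {"test-app": [("app.pactnote.com", "/")], "landing-page": [("pactnote.com", "/")]}
    print(find_route_collisions(before))  # {('pactnote.com', '/'): ['landing-page', 'test-app']}
    print(find_route_collisions(after))   # {}
```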

Conclusion: A Solid Foundation for a Grand Vision

Today, PactNote might seem like a project still in the ideation phase. But beneath the surface, a secure, self-managing, industry-standard (GitOps) infrastructure, ready to grow along with the application’s traffic, has already been built.

With this project, I didn’t just spin up a server; I designed the Software Development Life Cycle (SDLC) from scratch. I automated the entire process—from writing the code to deploying it, and finally, to monitoring it.

In my next post, I will talk about the architecture of the actual PactNote application that I will begin building on top of this massive infrastructure, the programming languages I will use, and the major problem this application will solve. Until then, keep enjoying autonomous systems and clean code!